
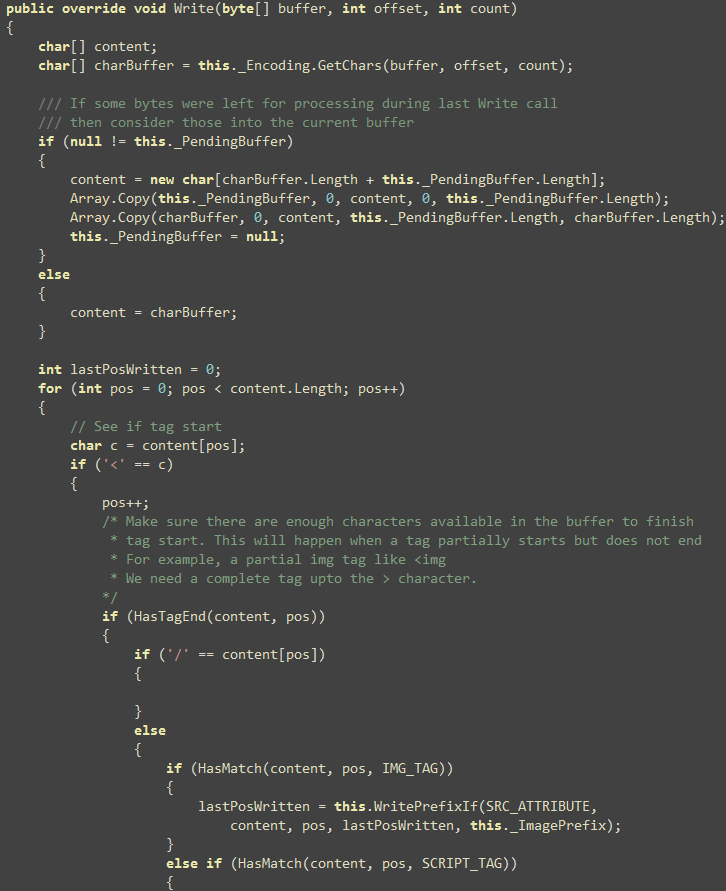

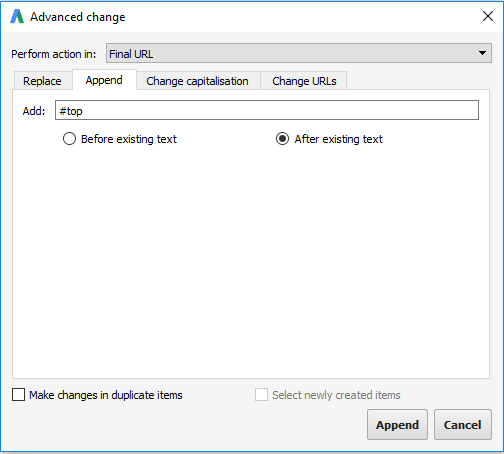

A few weeks ago I was tasked with creating a very large CSV file with some dynamic content. I decided that this would be an excellent opportunity to try out some of my C# skills and write the content of the file using a loop. I quickly realized that not all methods of writing files to the hard drive perform the same. In fact, some methods are just plain terrible. I decided to write this article to compare different ways to write text files and evaluate their performance.

The first scenario we are going to investigate is writing lines generated by a loop. The situation in the introduction is a good example: we need to loop many times and generate a line of text on each iteration.

File.AppendAllText

    for (int i = 0; i < 10000; i++)
        File.AppendAllText(Path, "Some line of text");

In the example above, the loop writes "Some line of text" to a text file 10,000 times. This turns out to be very slow because on every iteration of the for loop we open the file, append some text, and then close the file.

Using StreamWriter (Declared in For Loop)

    for (int i = 0; i < 10000; i++)
        using (StreamWriter streamwriter = File.AppendText(Path))
            streamwriter.WriteLine("Some line of text");

In this example, we are doing basically the same thing as in the previous example, except that we use the "using" statement and then append the line of text. This performs no better than "File.AppendAllText" because we are still opening the file and writing to it 10,000 times.

Using StreamWriter (Declared Before For Loop)

    using (StreamWriter streamwriter = File.AppendText(Path))
        for (int i = 0; i < 10000; i++) streamwriter.WriteLine("Some line of text");

In this code snippet, we open the file only once and then write to it 10,000 times. This performs much better than the previous two examples. I still wouldn't recommend it, because it still has to write to the hard drive many times.

Using StreamWriter With Larger Buffer

    using (StreamWriter streamwriter = new StreamWriter(Path, true, Encoding.UTF8, 65536))

My question is: what are ways I can store strings in a CSV that contain newline characters (i.e. \n), where each data point is in one line?

Sample data

This is a sample of the data I have:

    data = [

I want a CSV file, where the first line is "text,category" and every subsequent line is an entry from data.

    with open('file.csv', 'w+', encoding='utf-8') as file:
        csvwriter = csv.DictWriter(file, field_names)
        csvwriter.writerow('

    weird_literal == weird_name == weird_char  # True

That \N is pretty cool (and works in Python 3.6 inside formatted strings too). It basically allows you to pass the name of a character, as per Unicode's specification.

An alternative character that may serve as a good standard is '\u2063' (INVISIBLE SEPARATOR).

Now we use this weird character to replace '\n'. Here are the two ways that pop into my mind for achieving this:

Using a list comprehension on your list of lists, data:

    new_data = [[sample[0].replace('\n', weird_char), sample[1]] for sample in data]

Putting the data into a dataframe, and using replace on the whole text column in one go:

    df1 = pd.DataFrame(data, columns=['text', 'category'])
    df1.text = df1.text.str.replace('\n', weird_char)

The resulting dataframe looks like this, with newlines replaced:

    text  category

Now we write either of those identical dataframes to disk. I set index=False as you said you don't want row numbers to be in the CSV:

    FILE = '~/path/to/test_file.csv'

    Another newþline character,1

Getting the original data back from disk

Read the data back from file:

    new_df = pd.read_csv(FILE)

And we can replace the þ characters back to \n:

    new_df.text = new_df.text.str.replace(weird_char, '\n')

If you want things back into your list of lists, then you can do this:

    original_lists = [[text, category] for index, text, category in old_df_again.itertuples()]
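One way to keep every record on a single physical line is to swap each '\n' for a placeholder character before writing and to swap it back after reading. The following is a minimal sketch of that round trip using only Python's standard library. The rows, the helper names `write_one_line_per_row` and `read_rows_back`, and the choice of 'þ' as the placeholder are assumptions made for illustration; the approach only works if the placeholder never occurs in the real text.

```python
import csv
import os
import tempfile

# Placeholder assumed never to appear in the real text (an assumption of this sketch).
# The named escape below is the same character as the literal 'þ' and as '\xfe'.
weird_char = '\N{LATIN SMALL LETTER THORN}'

# Hypothetical rows in a [text, category] shape.
data = [
    ['some text in one line', 1],
    ['another new\nline character', 1],
]


def write_one_line_per_row(path, rows):
    """Write a header plus one physical line per row, hiding '\n' behind the placeholder."""
    with open(path, 'w', encoding='utf-8', newline='') as f:
        writer = csv.writer(f)
        writer.writerow(['text', 'category'])
        for text, category in rows:
            writer.writerow([text.replace('\n', weird_char), category])


def read_rows_back(path):
    """Read the file and restore the original newlines and integer categories."""
    with open(path, encoding='utf-8', newline='') as f:
        reader = csv.DictReader(f)
        return [
            [row['text'].replace(weird_char, '\n'), int(row['category'])]
            for row in reader
        ]


path = os.path.join(tempfile.mkdtemp(), 'test_file.csv')
write_one_line_per_row(path, data)

# Inspect the raw file: header line plus exactly one line per record.
with open(path, encoding='utf-8', newline='') as f:
    physical_lines = f.read().splitlines()

restored = read_rows_back(path)
```

Without the replacement step, the `csv` module would still round-trip the data by quoting the field, but a record containing '\n' would then span more than one physical line in the file, which is what the placeholder avoids.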
